In 2026, clinical trial data management has shifted from basic data entry to a strategic role that influences study success and regulatory approval. With trials now producing vast amounts of data from various sources, traditional methods are no longer sufficient. The focus has transitioned to intelligent, secure, and scalable data management strategies for effective clinical development.

- Strategy 1: AI-First Data Processing and Cleaning

Challenge: Manual data cleaning is linear, labor-intensive, and error-prone. A Phase III trial can yield over 2 million data points and require hundreds of hours of query generation, resolution, and reconciliation. As a result, data is often out of date by the time it is deemed “clean.”

Solution: AI-powered data processing applies machine learning models across the data lifecycle to handle tasks such as:

- Automated discrepancy detection uses algorithms to compare data from EDC, ePRO, wearables, and central labs in real time, identifying inconsistencies before they escalate into queries.

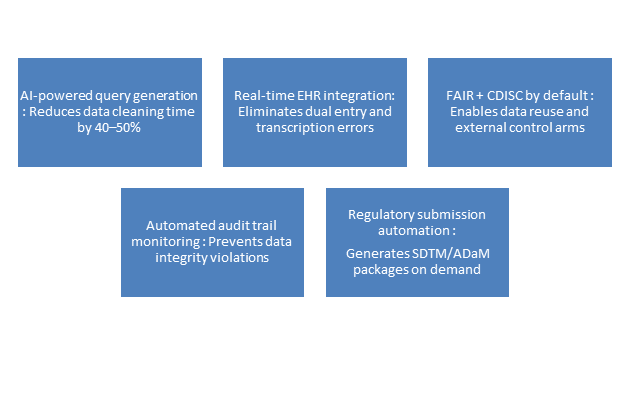

- AI-assisted query generation proposes specific, context-aware questions for sites, reducing back-and-forth communication by 40–50%.

- Confidence scoring assigns each data point a confidence score, so that high-risk data is prioritized for human review while low-risk data proceeds directly to analysis.
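The triage idea described above can be sketched in a few lines. This is a minimal illustration, not a production model: the `DataPoint` fields, the `confidence` heuristic (a simple relative-difference score standing in for a trained machine learning model), and the `review_below` threshold are all assumptions made for the example.

```python
from dataclasses import dataclass


@dataclass
class DataPoint:
    subject_id: str
    field: str
    edc_value: float   # value entered in the EDC system
    lab_value: float   # same measurement as reported by the central lab


def confidence(point: DataPoint, tolerance: float = 0.05) -> float:
    """Return a score in [0, 1]; 1.0 means both sources agree exactly.

    Hypothetical heuristic: a real system would use a trained model here.
    """
    reference = max(abs(point.edc_value), abs(point.lab_value))
    if reference == 0:
        return 1.0
    relative_gap = abs(point.edc_value - point.lab_value) / reference
    # The score falls linearly and reaches 0 at ten times the tolerance.
    return max(0.0, 1.0 - relative_gap / (10 * tolerance))


def triage(points, review_below=0.8):
    """Split points into those needing human review and those auto-accepted."""
    review, auto_accept = [], []
    for p in points:
        (review if confidence(p) < review_below else auto_accept).append(p)
    return review, auto_accept


points = [
    DataPoint("S001", "HGB", 13.5, 13.5),
    DataPoint("S001", "ALT", 42.0, 24.0),
    DataPoint("S002", "SBP", 120.0, 121.0),
]
review, auto_accept = triage(points)
print("review:", [p.field for p in review])
print("auto-accept:", [p.field for p in auto_accept])
```

In this toy data set only the ALT value, where the two sources disagree substantially, is routed to human review.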

- Strategy 2: Real-Time Data Capture and Integration

Challenge: Traditional data management follows a batch model: data is entered at sites, aggregated periodically, cleaned in batches, and locked weeks after the last patient visit. This approach cannot support adaptive trials, risk-based monitoring, or real-time safety surveillance.

Solution: Real-time data capture shifts from batch to streaming. Key components include:

- Direct integration between EHR and EDC via FHIR APIs enables automatic data transfer from hospital electronic health records to trial databases, thus removing the need for dual entry and reducing transcription errors.

- Wearable devices and connected sensors continuously transmit physiological data such as heart rate, activity, sleep, and glucose to cloud platforms with sub-minute latency, facilitating near-real-time safety monitoring.

- Automated data reconciliation employs streaming algorithms that continuously align data from various sources, promptly identifying time-stamp mismatches and unit discrepancies.
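As a rough sketch of the reconciliation step, the function below compares one EDC glucose reading with one device reading and reports time-stamp and unit problems. A streaming job would call it for each pair of records as they arrive. The record layout, the `max_skew` and `tolerance` defaults, and the function names are assumptions for illustration; the conversion factor is the commonly used approximation for glucose.

```python
from datetime import datetime, timedelta

MGDL_PER_MMOLL = 18.016  # approximate mg/dL per mmol/L for glucose


def to_mmol_per_l(value: float, unit: str) -> float:
    """Normalize a glucose value to mmol/L."""
    if unit == "mmol/L":
        return value
    if unit == "mg/dL":
        return value / MGDL_PER_MMOLL
    raise ValueError(f"unsupported unit: {unit}")


def reconcile(edc, device, max_skew=timedelta(minutes=5), tolerance=0.3):
    """Return a list of issue codes for one pair of readings."""
    issues = []
    if edc["unit"] != device["unit"]:
        issues.append("unit_discrepancy")
    if abs(edc["time"] - device["time"]) > max_skew:
        issues.append("timestamp_mismatch")
    # Compare values only after converting both to the same unit.
    gap = abs(
        to_mmol_per_l(edc["value"], edc["unit"])
        - to_mmol_per_l(device["value"], device["unit"])
    )
    if gap > tolerance:
        issues.append("value_mismatch")
    return issues


edc_reading = {"value": 99.0, "unit": "mg/dL", "time": datetime(2026, 3, 1, 8, 0)}
device_reading = {"value": 5.5, "unit": "mmol/L", "time": datetime(2026, 3, 1, 8, 12)}
issues = reconcile(edc_reading, device_reading)
print(issues)
```

Here the two readings agree once normalized, so only the unit and time-stamp differences are flagged rather than a false value mismatch.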

- Strategy 3: FAIR Data Principles and Interoperability

Solution: The FAIR principles (Findable, Accessible, Interoperable, Reusable) have moved from an aspiration in academia to essential requirements for effective, regulator-ready data management in 2026.

- Standardized data models make CDISC formats (SDTM, ADaM) the default, with data transformed into these formats continuously throughout the trial rather than only at the end.

- API-first architecture exposes data stores through secure APIs so that authorized systems can access data directly, reducing the need for custom extract-transform-load pipelines.

- Metadata-driven design allows for variable definitions, permissible values, and derivation rules to be stored as machine-readable metadata, instead of being included in PDF data definition documents.
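To make the metadata-driven idea concrete, here is a minimal sketch in which variable definitions and permissible values live in a JSON document and a generic function validates records against them. The metadata layout and the variable names (`SEX`, `AGE`) are hypothetical and far simpler than a real define file.

```python
import json

# Hypothetical machine-readable variable definitions.
METADATA_JSON = """
{
  "SEX": {"type": "str", "permitted": ["M", "F", "U"]},
  "AGE": {"type": "int", "min": 18, "max": 100}
}
"""

TYPES = {"str": str, "int": int, "float": float}


def validate(record: dict, metadata: dict) -> list:
    """Check one record against the metadata and return error messages."""
    errors = []
    for name, rule in metadata.items():
        if name not in record:
            errors.append(f"{name}: missing")
            continue
        value = record[name]
        if not isinstance(value, TYPES[rule["type"]]):
            errors.append(f"{name}: expected {rule['type']}")
            continue
        if "permitted" in rule and value not in rule["permitted"]:
            errors.append(f"{name}: {value!r} not permitted")
        if "min" in rule and value < rule["min"]:
            errors.append(f"{name}: {value} below minimum {rule['min']}")
        if "max" in rule and value > rule["max"]:
            errors.append(f"{name}: {value} above maximum {rule['max']}")
    return errors


metadata = json.loads(METADATA_JSON)
errors = validate({"SEX": "X", "AGE": 17}, metadata)
print(errors)
```

Because the rules are data rather than code, adding a variable or tightening a range means editing the metadata, not the validator.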

- Strategy 4: Data Integrity by Design (Not by Inspection)

Challenge: Data integrity failures are a top compliance risk in 2026. Traditional methods treat integrity as after-the-fact quality control and rely on reactive investigation of discrepancies.

Solution: Embed integrity controls at the point of data creation:

- Source data verification (SDV) 2.0 utilizes risk-based algorithms to target specific data points for verification, emphasizing high-risk fields and critical endpoints, rather than verifying all data. AI models enhance this process by learning from previous inspection results to focus verification efforts more effectively.

- Automated audit trail monitoring uses AI to detect suspicious activities such as backdating, after-hours entries, and unusual deletion rates, and promptly notifies data managers.

- Blockchain-based chain of custody gives high-risk data tamper-evident, time-stamped records intended to be audit-ready for regulators.
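The tamper-evidence property can be illustrated with a simplified hash chain. This is a sketch of the underlying idea only, not a distributed blockchain: each record stores the SHA-256 hash of its content plus the previous record's hash, so any later change breaks verification. The function names and record layout are assumptions for the example.

```python
import copy
import hashlib
import json

GENESIS = "0" * 64  # placeholder hash that precedes the first record


def _digest(entry: dict, prev_hash: str) -> str:
    """Hash the entry together with the previous record's hash."""
    payload = json.dumps(entry, sort_keys=True) + prev_hash
    return hashlib.sha256(payload.encode("utf-8")).hexdigest()


def append(chain: list, entry: dict) -> None:
    """Add an entry, linking it to the current end of the chain."""
    prev_hash = chain[-1]["hash"] if chain else GENESIS
    chain.append(
        {"entry": entry, "prev": prev_hash, "hash": _digest(entry, prev_hash)}
    )


def verify(chain: list) -> bool:
    """Recompute every hash; return False if any record was altered."""
    prev_hash = GENESIS
    for block in chain:
        if block["prev"] != prev_hash:
            return False
        if block["hash"] != _digest(block["entry"], prev_hash):
            return False
        prev_hash = block["hash"]
    return True


chain = []
append(chain, {"subject": "S001", "field": "SBP", "value": 128, "ts": "2026-03-01T08:00:00Z"})
append(chain, {"subject": "S001", "field": "SBP", "value": 131, "ts": "2026-03-02T08:00:00Z"})

tampered = copy.deepcopy(chain)
tampered[0]["entry"]["value"] = 118  # simulate an undocumented edit

print(verify(chain), verify(tampered))
```

The untouched chain verifies, while the copy with an edited first record does not.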

- Strategy 5: Cloud-Native Data Platforms

Challenge: On-premise and hybrid data management systems struggle to handle the demands of modern clinical trials due to slow data transfers, high storage costs, and complex disaster recovery processes.

Solution: Cloud-native platforms built for clinical research:

- Unified data storage: All trial data is stored in a single cloud repository that supports schema-on-read, so raw data is preserved unchanged and analysis views can be generated on demand.

- Elastic compute: When statistical analysis or machine learning needs heavy processing power, the platform spins up many compute nodes for as long as the job runs and then scales back to zero, so sponsors pay only for the compute seconds used.

- Built-in compliance: Leading platforms are designed to support compliance with 21 CFR Part 11, GDPR, and HIPAA, and feature automated backup, disaster recovery, and data residency controls.
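Schema-on-read can be sketched as follows: raw records are kept exactly as received, and a structure is imposed only when an analysis view is requested. The in-memory `RAW_STORE`, the record layout, and the `analysis_view` function are illustrative assumptions standing in for a cloud object store and query engine.

```python
import json

# Raw records are stored as received (here, JSON lines) and never rewritten.
RAW_STORE = [
    '{"subject": "S001", "source": "edc", "payload": {"sbp": 128, "dbp": 82}}',
    '{"subject": "S001", "source": "wearable", "payload": {"hr": 71}}',
    '{"subject": "S002", "source": "edc", "payload": {"sbp": 141, "dbp": 90}}',
]


def analysis_view(raw_lines, source, columns):
    """Project raw records into rows at read time."""
    rows = []
    for line in raw_lines:
        record = json.loads(line)
        if record["source"] != source:
            continue
        row = {"subject": record["subject"]}
        for col in columns:
            # Fields absent from a record come back as None.
            row[col] = record["payload"].get(col)
        rows.append(row)
    return rows


view = analysis_view(RAW_STORE, source="edc", columns=["sbp"])
print(view)
```

A different analysis can request other columns from the same raw records without any reload or migration.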

- Strategy 6: Adaptive Data Management for Decentralized Trials

Challenge: Decentralized clinical trials (DCTs) gather data from various sources, including home health visits, local labs, direct-to-patient shipping, and telehealth platforms, each presenting unique data formats, timeliness, and quality characteristics.

Solution: Adaptive data management that flexes with trial design:

- Dynamic data source configuration enables automatic provisioning of ingestion pipelines, validation rules, and storage schemas by the data management system whenever new DCT elements, like a wearable device, are added, eliminating the need for manual coding.

- Privacy-preserving consent dashboards let participants grant temporary access to their electronic health record (EHR) data, wearable streams, or smartphone location data, so that data managers never handle raw identifiable information.

- Real-time compliance monitoring tracks data completeness for each participant and site and raises automated alerts when a participant’s data contribution is insufficient for two consecutive days.
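The two-consecutive-day completeness rule can be expressed as a small check. This is a sketch under stated assumptions: "insufficient" is taken to mean fewer than `min_fraction` of the expected daily records, and the function name, parameters, and per-minute sampling rate are hypothetical.

```python
from datetime import date, timedelta


def needs_alert(daily_counts, as_of, expected_per_day, min_fraction=0.8, window=2):
    """Return True if each of the last `window` days fell below the threshold.

    daily_counts maps a date to the number of records received that day;
    days with no entry count as zero records.
    """
    threshold = expected_per_day * min_fraction
    for offset in range(window):
        day = as_of - timedelta(days=offset)
        if daily_counts.get(day, 0) >= threshold:
            return False  # at least one recent day was sufficient
    return True


# Example: a wearable expected to send one reading per minute (1440 per day).
counts = {
    date(2026, 3, 1): 1440,
    date(2026, 3, 2): 300,
    # no data at all on 2026-03-03
}
alert_day_2 = needs_alert(counts, date(2026, 3, 2), expected_per_day=1440)
alert_day_3 = needs_alert(counts, date(2026, 3, 3), expected_per_day=1440)
print(alert_day_2, alert_day_3)
```

A single poor day does not trigger an alert; the second consecutive poor day does.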

The 2026 Data Management Checklist

For sponsors and CROs building smart data management capabilities, here is the essential checklist:

Several emerging trends will shape data management in the coming years:

- Synthetic data generation involves AI models that produce privacy-preserving synthetic patient data. This method aids in algorithm training and regulatory simulations, thereby lessening dependence on actual patient data for non-interventional applications.

- Federated learning trains models across multiple sponsors' data sets without moving raw data, which keeps sensitive data confidential.

- Advancements in quantum computing necessitate the adoption of post-quantum cryptography in data management systems to secure long-term patient data archives.
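As a small illustration of the federated learning trend, the aggregation step can be sketched as a sample-weighted average of locally trained model parameters, in the style of federated averaging. Only parameter vectors and sample counts are shared, never patient records. The function name and the toy numbers are assumptions for the example; a real deployment would add secure aggregation and many training rounds.

```python
def federated_average(updates):
    """Combine local model parameters into a global model.

    updates is a list of (weights, n_samples) pairs, one per participating
    site or sponsor; the result is the sample-weighted mean of the weights.
    """
    total = sum(n for _, n in updates)
    dim = len(updates[0][0])
    return [sum(w[i] * n for w, n in updates) / total for i in range(dim)]


# Two hypothetical sponsors train locally and share only their parameters.
sponsor_a = ([1.0, 2.0], 100)   # (local weights, number of local samples)
sponsor_b = ([3.0, 4.0], 300)
global_weights = federated_average([sponsor_a, sponsor_b])
print(global_weights)
```

The sponsor with more samples pulls the global parameters closer to its local values.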

Smart clinical trial data management in 2026 means rethinking the entire data lifecycle through real-time capture, AI cleaning, FAIR principles, and cloud-native platforms. Sponsors that treat data management as a strategic asset stand to gain faster enrollment, quicker database lock, and smoother regulatory interactions. Those relying on outdated, manual approaches will struggle to keep pace with competitors.

Looking to modernize your clinical trial data management? Contact CurexBio to discuss how our smart data strategies can accelerate your next study.